Instant SEO Checker + Report in 30 Seconds

Enter the URL of any landing page to see how optimized it is for one keyword or phrase…

What’s In My Instant SEO Score + Report?

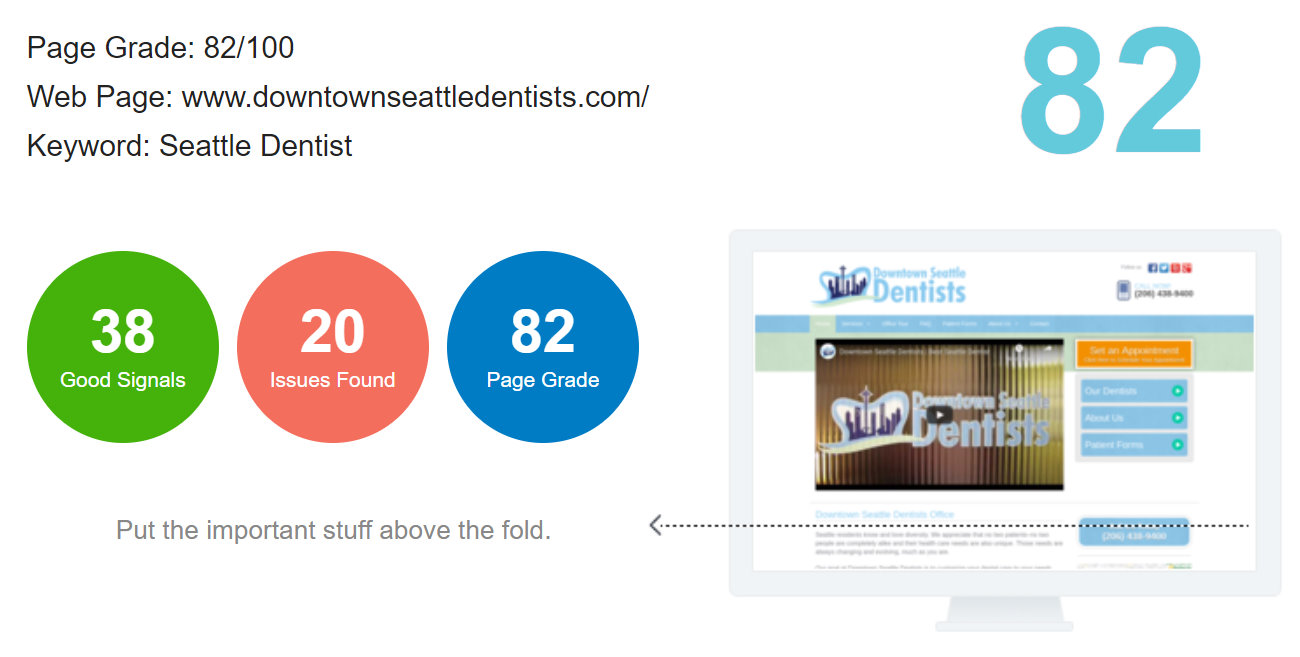

SEO Checker Overview

This section outlines your overall SEO score between 0 and 100 based on the 58 SEO elements checked. In addition, you can see the number of “good signals” and “issues found”.

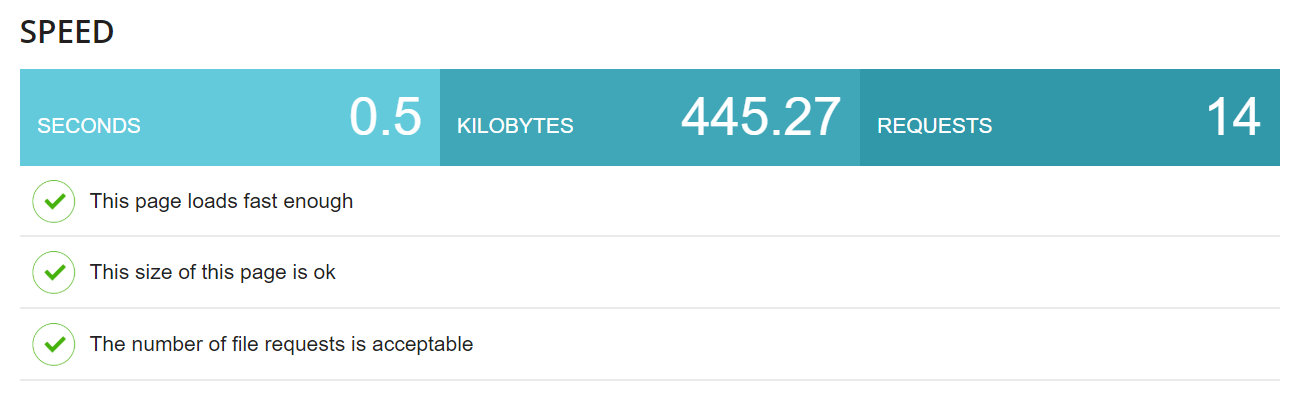

Website Speed

Website speed is an increasingly important aspect of SEO. This section checks your page load speed, page size and the number of file requests.

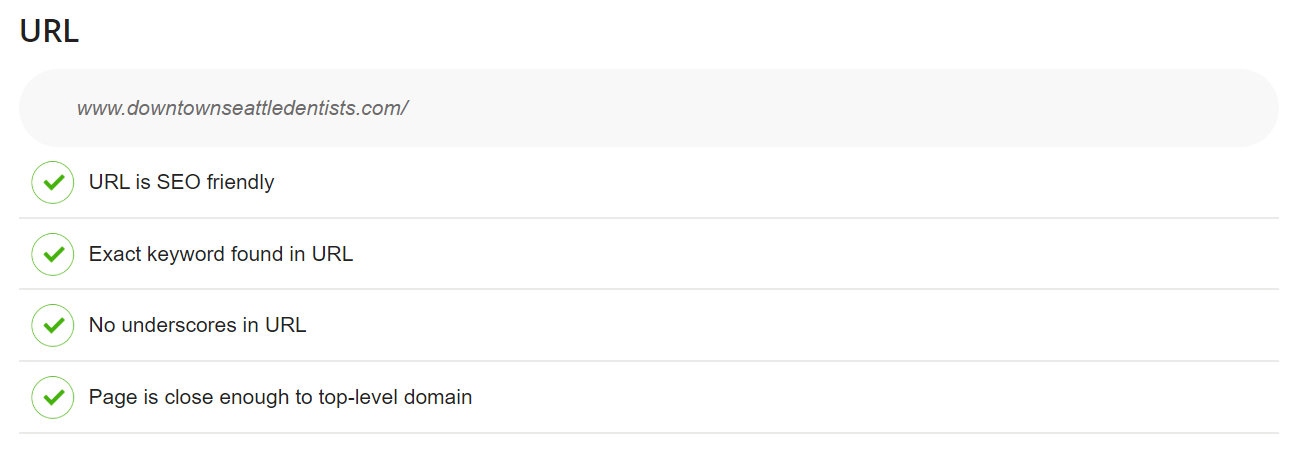

URL

This section checks whether your URL is SEO friendly: whether the keyword appears in the domain, whether the URL contains underscores and whether the page is close enough to the top-level domain.
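As a rough illustration of the URL checks described above, here is a minimal Python sketch. It is not the checker's actual implementation: the function name `check_url`, the `max_depth` threshold of 3 and the reading of "close enough to the top-level domain" as a count of path segments are all assumptions made for the example.

```python
from urllib.parse import urlparse


def check_url(url: str, keyword: str, max_depth: int = 3) -> dict:
    """Run simple URL checks for one keyword (illustrative only)."""
    parsed = urlparse(url)
    host = (parsed.hostname or "").lower()
    # Compare without spaces or hyphens so "seo checker" matches "seo-checker.example.com".
    compact_keyword = keyword.lower().replace(" ", "")
    # Path segments, ignoring empty pieces caused by leading or trailing slashes.
    segments = [s for s in parsed.path.split("/") if s]
    return {
        "keyword_in_domain": compact_keyword in host.replace("-", ""),
        "has_underscores": "_" in url,
        "path_depth": len(segments),
        # Assumption: "close" means at most max_depth segments below the root.
        "close_to_root": len(segments) <= max_depth,
    }


result = check_url("https://seo-checker.example.com/tools/free_report", "seo checker")
```

For this sample URL the keyword is found in the domain, an underscore is flagged in `free_report`, and the page sits two segments below the root.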

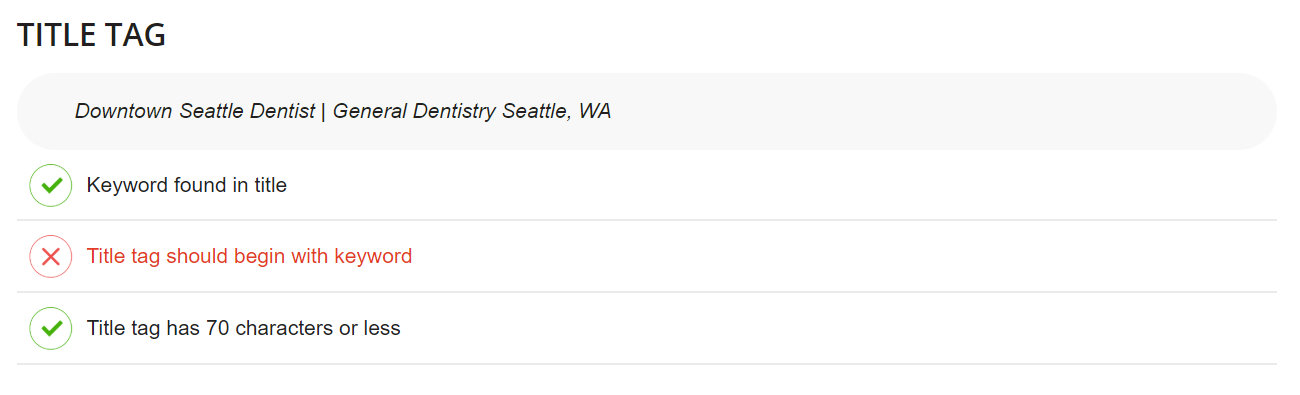

Title Tag

Your title tag is one of the most important SEO elements. It helps both users and search engines know what the page is about. This section checks if your keyword is found in the title tag, if the title tag starts with the keyword and if your title tag is 70 characters or less.
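The three title tag checks can be sketched in a few lines of Python using only the standard library. This is an illustrative sketch, not the checker's own code; `TitleParser` and `check_title` are hypothetical names, and the comparison is assumed to be case-insensitive.

```python
from html.parser import HTMLParser


class TitleParser(HTMLParser):
    """Collects the text inside the <title> element."""

    def __init__(self):
        super().__init__()
        self._in_title = False
        self.title = ""

    def handle_starttag(self, tag, attrs):
        if tag == "title":
            self._in_title = True

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def check_title(html: str, keyword: str, max_length: int = 70) -> dict:
    parser = TitleParser()
    parser.feed(html)
    title = parser.title.strip()
    lowered, kw = title.lower(), keyword.lower()
    return {
        "title": title,
        "keyword_in_title": kw in lowered,
        "starts_with_keyword": lowered.startswith(kw),
        # 70 characters or less, and not empty.
        "length_ok": 0 < len(title) <= max_length,
    }


report = check_title(
    "<html><head><title>SEO Checker: Free Report</title></head></html>",
    "seo checker",
)
```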

Meta Description

Your meta description is an important SEO element that is pulled into SERP listings to help both users and search engines understand what your page is about. This section checks if your keyword is included in your meta description and if the meta description is 160 characters or less.
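A minimal Python sketch of the two meta description checks follows. It assumes the description lives in a `<meta name="description" content="...">` tag and that matching is case-insensitive; the names `MetaDescriptionParser` and `check_meta_description` are made up for this example.

```python
from html.parser import HTMLParser


class MetaDescriptionParser(HTMLParser):
    """Captures the content of the first <meta name="description"> tag."""

    def __init__(self):
        super().__init__()
        self.description = None

    def handle_starttag(self, tag, attrs):
        if tag != "meta" or self.description is not None:
            return
        attr = dict(attrs)
        if (attr.get("name") or "").lower() == "description":
            self.description = (attr.get("content") or "").strip()


def check_meta_description(html: str, keyword: str, max_length: int = 160) -> dict:
    parser = MetaDescriptionParser()
    parser.feed(html)
    description = parser.description or ""
    return {
        "found": parser.description is not None,
        "keyword_in_description": keyword.lower() in description.lower(),
        # 160 characters or less, and not empty.
        "length_ok": 0 < len(description) <= max_length,
    }


report = check_meta_description(
    '<meta name="description" content="Run a free SEO checker report in seconds.">',
    "seo checker",
)
```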

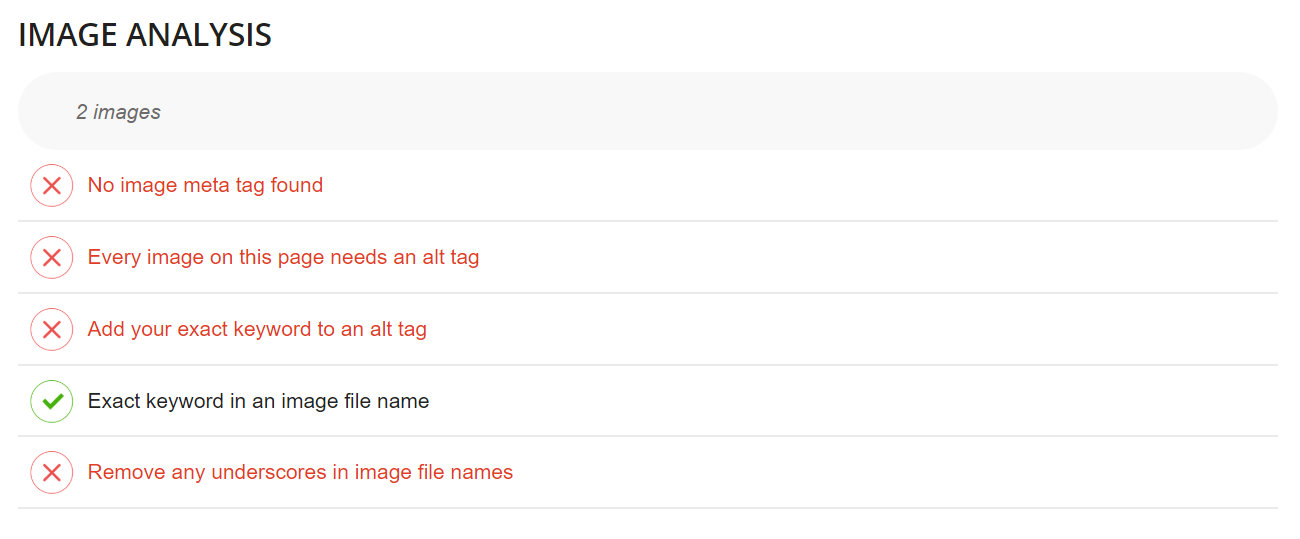

Image Analysis

Google and other search engines need image metadata to determine what the images on your web page are about. This section checks your image meta tags, alt tags and file names to ensure they are optimized for SEO.
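To make the alt text and file name checks concrete, here is a small Python sketch. It is only an approximation of what such a check might do: `audit_images` is a hypothetical helper, and it assumes a keyword in a file name is written with hyphens in place of spaces.

```python
from html.parser import HTMLParser


class ImageParser(HTMLParser):
    """Collects the src and alt attributes of every <img> tag."""

    def __init__(self):
        super().__init__()
        self.images = []

    def handle_starttag(self, tag, attrs):
        if tag == "img":
            attr = dict(attrs)
            self.images.append(
                {"src": attr.get("src") or "", "alt": (attr.get("alt") or "").strip()}
            )


def audit_images(html: str, keyword: str) -> list:
    parser = ImageParser()
    parser.feed(html)
    kw = keyword.lower()
    kw_slug = kw.replace(" ", "-")  # assumed file name convention
    report = []
    for img in parser.images:
        filename = img["src"].rsplit("/", 1)[-1].lower()
        report.append(
            {
                "src": img["src"],
                "has_alt": bool(img["alt"]),
                "keyword_in_alt": kw in img["alt"].lower(),
                "keyword_in_filename": kw_slug in filename,
            }
        )
    return report


report = audit_images(
    '<img src="/img/seo-checker-report.png" alt="SEO checker report">'
    '<img src="/img/IMG_001.jpg">',
    "seo checker",
)
```

In this sample the first image passes all three checks, while the second has no alt text and a generic file name.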

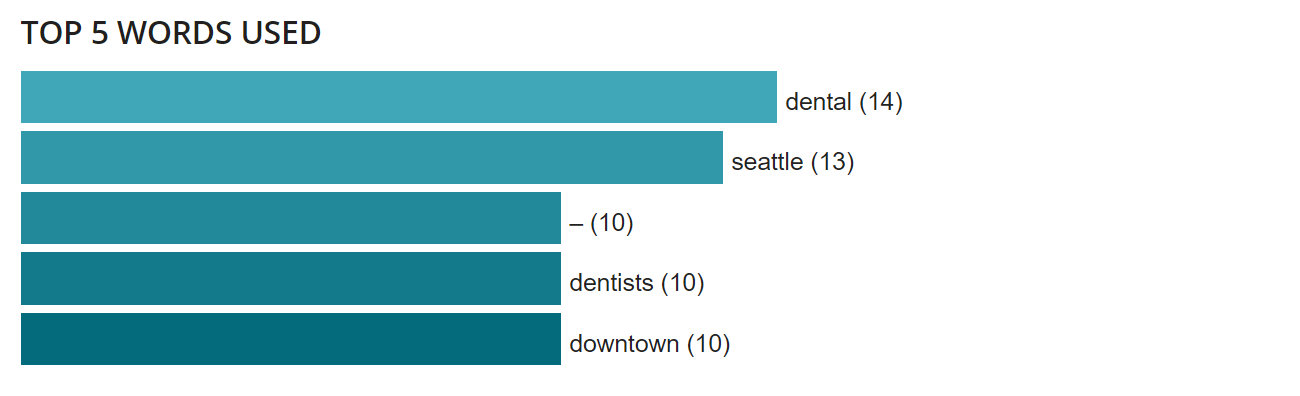

Top 5 Keywords Used

Content and keyword density indicate to Google what the page is about. This section identifies which 5 keywords are used the most within your content.
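One simple way to find the most-used keywords is to count word frequencies after removing common filler words. The Python sketch below does that; the short `STOP_WORDS` set, the minimum word length and the density formula (occurrences divided by total words) are assumptions for illustration, not the checker's documented method.

```python
import re
from collections import Counter

# A deliberately tiny stop-word list; a real tool would use a much longer one.
STOP_WORDS = {"the", "and", "for", "are", "you", "your", "with", "this", "that", "from"}


def top_keywords(text: str, n: int = 5) -> list:
    """Return (word, count, density) for the n most frequent words."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    total = len(words)
    counts = Counter(w for w in words if w not in STOP_WORDS and len(w) > 2)
    return [(word, count, count / total) for word, count in counts.most_common(n)]


top = top_keywords("SEO tools check SEO scores. SEO reports help.")
```

Here `top[0]` is `("seo", 3, 0.375)`, since "seo" accounts for 3 of the 8 words in the sample text.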

Heading Tags

Headers are an important element for both users and search engines because they explain your web page’s key points and content sections. This section checks to see if your keywords are included in the important headers.
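The heading check can be sketched in Python as follows. Which headers count as "important" is not specified here, so this example assumes `h1` through `h3`; `HeadingParser` and `headings_with_keyword` are hypothetical names.

```python
from html.parser import HTMLParser


class HeadingParser(HTMLParser):
    """Collects the text of h1-h3 headings (an assumed definition of 'important')."""

    HEADINGS = {"h1", "h2", "h3"}

    def __init__(self):
        super().__init__()
        self._current = None
        self.headings = []  # list of [tag, text]

    def handle_starttag(self, tag, attrs):
        if tag in self.HEADINGS:
            self._current = tag
            self.headings.append([tag, ""])

    def handle_endtag(self, tag):
        if tag == self._current:
            self._current = None

    def handle_data(self, data):
        if self._current:
            self.headings[-1][1] += data


def headings_with_keyword(html: str, keyword: str) -> list:
    parser = HeadingParser()
    parser.feed(html)
    kw = keyword.lower()
    # One (tag, text, contains_keyword) tuple per heading, in document order.
    return [(tag, text.strip(), kw in text.lower()) for tag, text in parser.headings]


result = headings_with_keyword(
    "<h1>Free SEO Checker</h1><h2>How it works</h2>", "seo checker"
)
```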

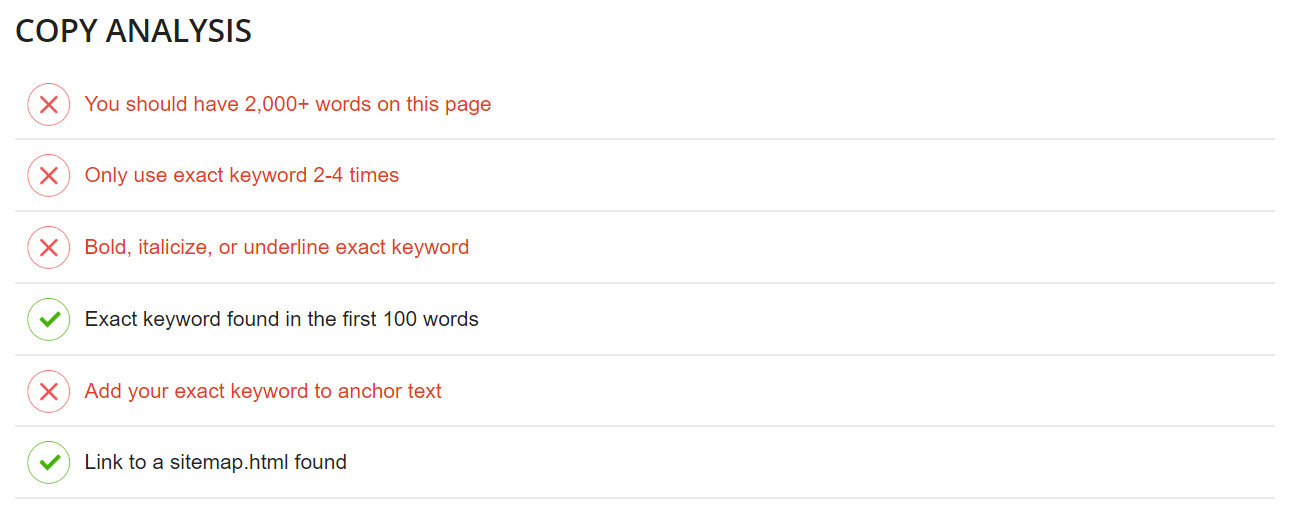

Copy Analysis

Content is king. Your web page copy is definitely one of the most important aspects of your page’s SEO. This section checks several important SEO elements to make sure your copy follows SEO best practices, including word count, frequency, emphasis, priority and anchor text.

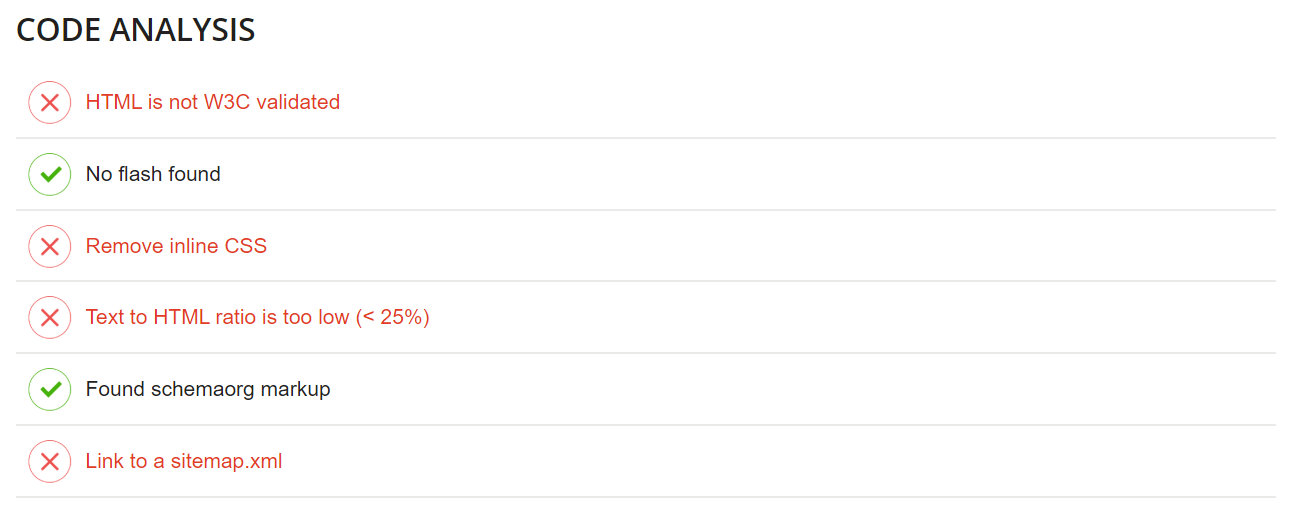

Code Analysis

Google and other search engines read code. Several important standards need to be followed to ensure your pages are being properly read and indexed. This section checks W3C HTML validation, Flash, CSS, text-to-HTML ratio, schema and sitemaps.
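Of the code checks listed, the text-to-HTML ratio is the easiest to show as a formula: visible text length divided by total HTML length. The Python sketch below is one plausible way to compute it, assuming that text inside `script` and `style` elements does not count as visible; the exact definition used by any given checker may differ.

```python
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collects text nodes, skipping the contents of <script> and <style>."""

    SKIP = {"script", "style"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def text_to_html_ratio(html: str) -> float:
    """Visible text characters divided by total HTML characters."""
    if not html:
        return 0.0
    parser = TextExtractor()
    parser.feed(html)
    # Collapse runs of whitespace so indentation does not inflate the text length.
    text = " ".join(" ".join(parser.chunks).split())
    return len(text) / len(html)


ratio = text_to_html_ratio("<script>var x=1;</script><p>Hi</p>")
```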

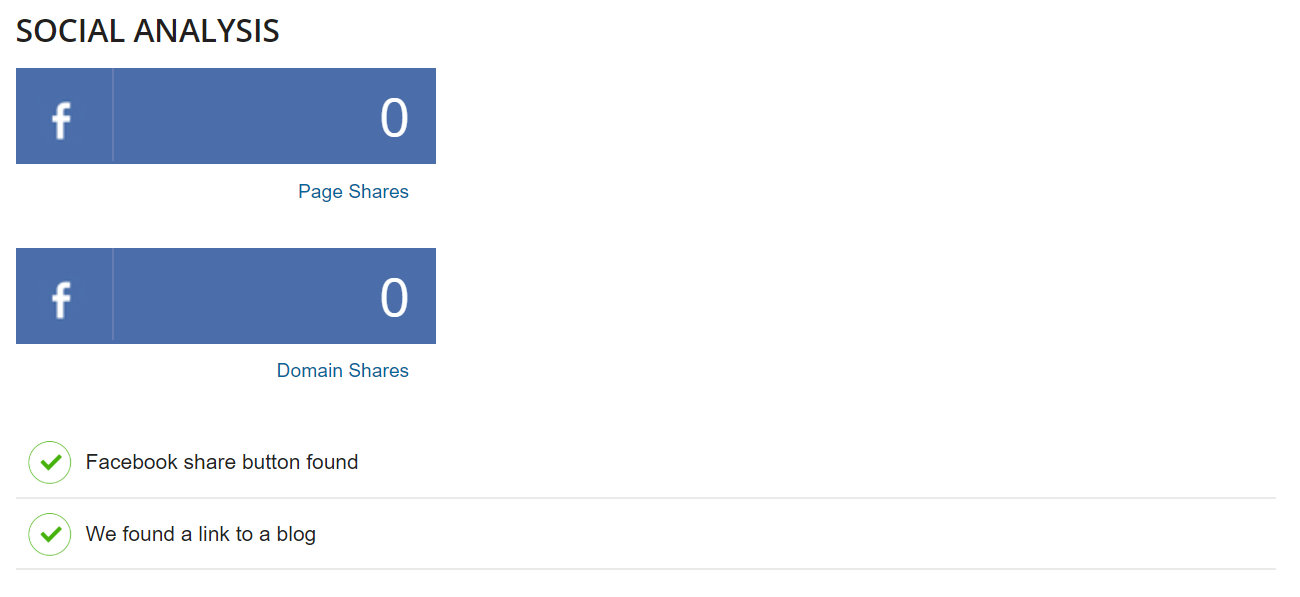

Social Analysis

Shares on social media have become an increasingly important SEO indicator. This section checks Facebook for shares as well as the existence of a Facebook share button and active blog.

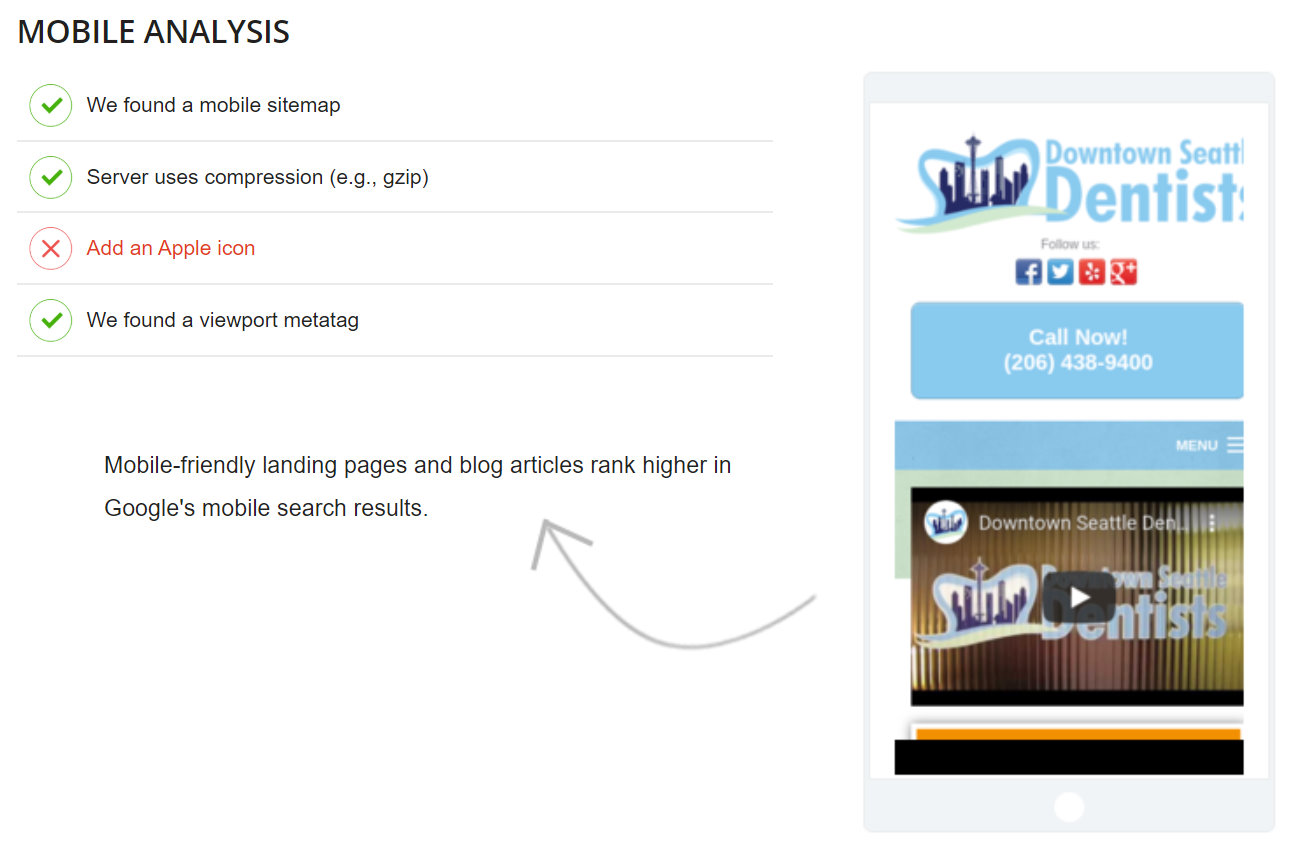

Mobile Analysis

More than 50% of Google’s 5 billion+ daily searches come from mobile devices. Thus, Google has adopted a mobile-first policy. This means all aspects of the mobile version of your website are more important than the desktop version.

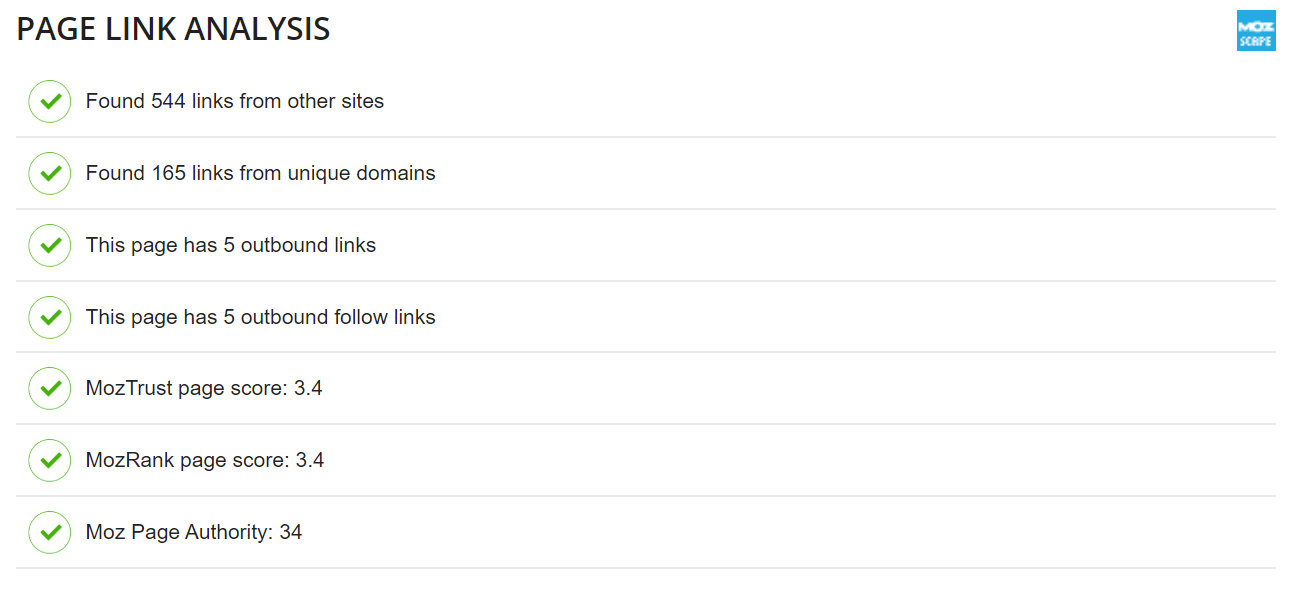

Page Link Analysis

Backlinks are still a primary indicator to Google and other search engines of the authority and trust of your website. This section checks both inbound and outbound links as well as the MozTrust, MozRank and Moz Page Authority scores of your web page.

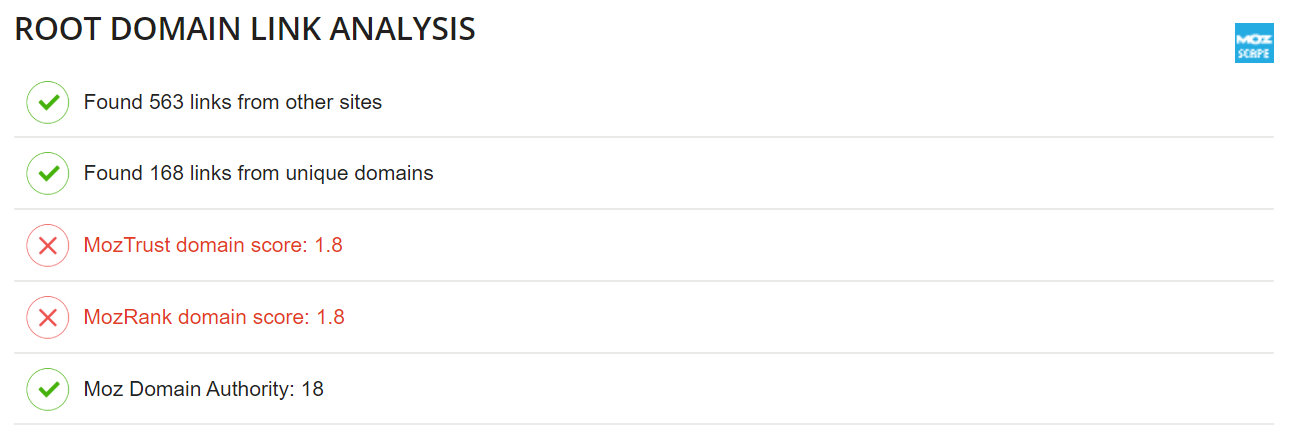

Root Domain Link Analysis

The homepage of your website or root domain is usually the most authoritative page on your website. This section checks the links and MozTrust, MozRank and Moz Domain Authority scores of your homepage or root domain.

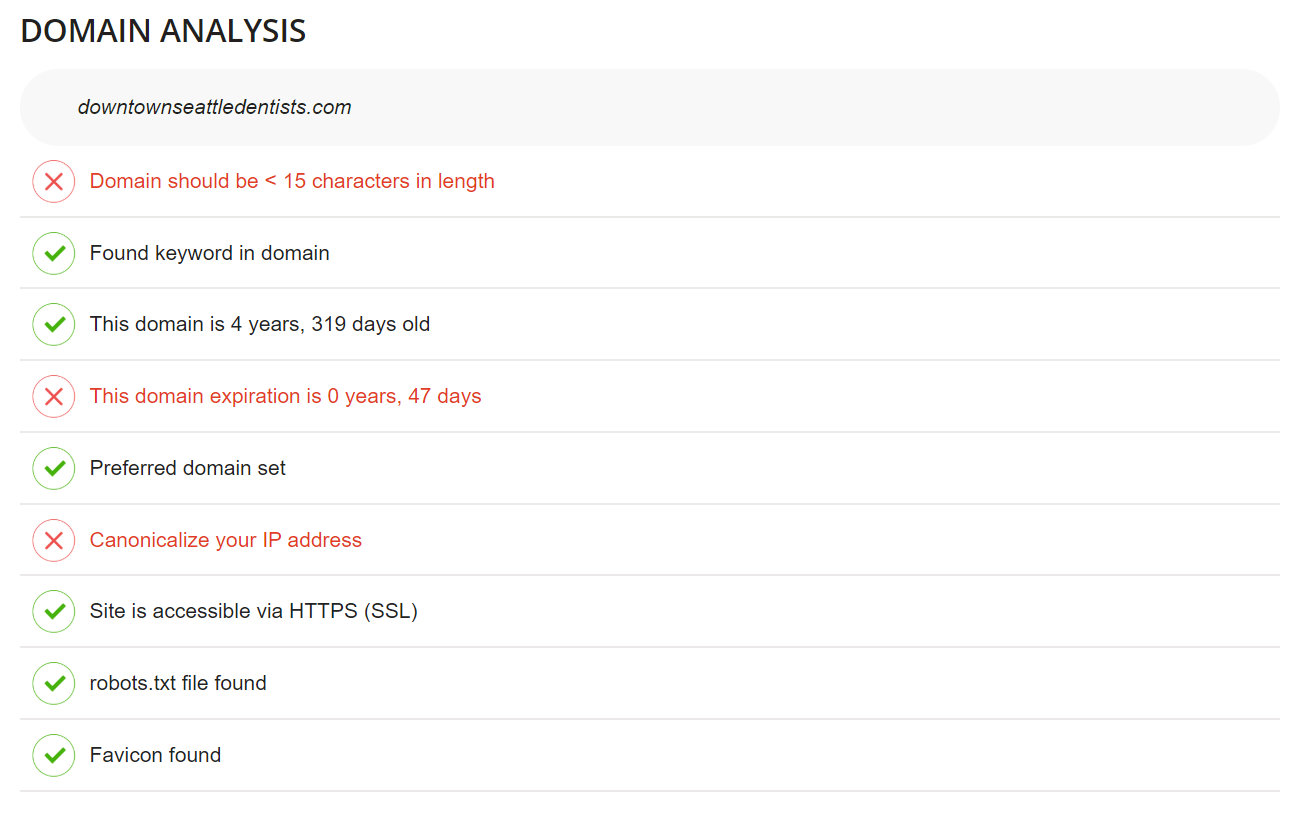

Domain Analysis

This section checks several domain factors including: domain length, age of domain, expiration date, canonicalization, SSL, robots.txt and favicon.

SEO Checker FAQs

SEO Checkers Compared

| SEO Checker | Keyword Check | Elements Checked |
|---|---|---|
| JEMSU Instant SEO Checker | Checks Keyword | 63 |
| JEMSU Site Auditor | Doesn’t Check Keyword | 45 |
| SEObility SEO Check | Doesn’t Check Keyword | 62 |
| Neil Patel SEO Analyzer | Doesn’t Check Keyword | |
| SEOSiteCheckUp | Doesn’t Check Keyword | 48 |
| Woorank | Doesn’t Check Keyword | 62 |
| SEOptimer | Doesn’t Check Keyword | 49 |
| SEMrush | Doesn’t Check Keyword | |
| SEOtesteronline | Doesn’t Check Keyword | |